Top Fake ID Websites Unveiled Numerous websites selling counterfeit IDs have emerged in 2024, all guaranteeing top-notch quality and authenticity. Among this vast array of platforms,...

Prompt engineers design, test, and refine the prompts that guide AI models, so those systems can deliver data and information quickly and accurately. To become successful in this field, you’ll need a strong educational background in...

Assembling the right team is critical for building LLM-powered applications. But how do you find the right people? This article will explore the key elements of assembling a qualified...

Are you looking for an efficient way to talk with customers? Sexting AI chatbots have the potential to transform your business. This guide will show you...

Sexting is a traditional, classic way to have phone sex discreetly and pleasurably. In fact, despite the passage of time and...

I’m building an offshore tech team for AI software development to tap into global talent and cut costs. With AI transforming industries, having a dedicated team...

Ever feel like a cat at a dog show? That was me, the day I stumbled onto the fascinating world of AI prompt engineering. With no...

Over the last three decades, the Python programming language has revolutionized information technology (IT) as we know it. It has helped evolve...

Launching a startup is an exciting journey filled with creativity and innovation. As you embark on this path, one of the most crucial tasks is creating...

Integrating AI technologies into data-driven marketing opens new marketing possibilities and boosts existing campaigns, customer engagement, and data automation....

So, you’re considering using ChatGPT for your university assignment, and you might be worried about whether your university can detect it. ChatGPT is an...

Enhance your creative and productive capabilities with a user-friendly, AI-driven photo editing application designed to simplify your photo editing process.

Artificial Intelligence (AI) is rapidly transforming the modern workplace this year. Just think of Midjourney, ChatGPT, and LLaMA. Serving as a powerful...

As I dive into the realm of the Free Undress AI App, I am keen to uncover its true colors and unravel its potential. With an...

Undress AI is a popular virtual wardrobe app that helps users organize their clothing items and plan their outfits. The app allows you to upload photos...

Our website offers several expert-recommended strategies for successful crypto day trading. These strategies include technical analysis using charting tools like TradingView and indicators such as the Stochastic RSI, using a...
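
The Stochastic RSI applies the stochastic-oscillator formula to RSI values: (RSI - lowest RSI) / (highest RSI - lowest RSI) over a look-back window. Here is a minimal Python sketch, assuming Wilder's smoothing for the RSI and the common 14-period defaults. The handling of flat windows is an assumed convention, and charting platforms may differ in smoothing details:

```python
def _wilder_rsi_value(avg_gain: float, avg_loss: float) -> float:
    """Convert smoothed average gain/loss into an RSI value on a 0-100 scale."""
    if avg_loss == 0:
        # No losses in the window: 100 if prices rose, 50 (neutral) if completely flat.
        return 100.0 if avg_gain > 0 else 50.0
    rs = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + rs)


def rsi(closes: list[float], period: int = 14) -> list[float]:
    """Wilder's RSI; returns len(closes) - period values (empty if too few closes)."""
    if len(closes) <= period:
        return []
    changes = [b - a for a, b in zip(closes, closes[1:])]
    gains = [max(c, 0.0) for c in changes]
    losses = [max(-c, 0.0) for c in changes]
    # Seed with simple averages over the first `period` price changes.
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period
    values = [_wilder_rsi_value(avg_gain, avg_loss)]
    # Wilder's smoothing for every later change.
    for gain, loss in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + gain) / period
        avg_loss = (avg_loss * (period - 1) + loss) / period
        values.append(_wilder_rsi_value(avg_gain, avg_loss))
    return values


def stoch_rsi(closes: list[float], period: int = 14, window: int = 14) -> list[float]:
    """Stochastic RSI in [0, 1]: where the latest RSI sits within its recent range."""
    r = rsi(closes, period)
    out = []
    for i in range(window - 1, len(r)):
        recent = r[i - window + 1 : i + 1]
        lo, hi = min(recent), max(recent)
        # Flat RSI over the window: report the midpoint (an assumed convention).
        out.append(0.5 if hi == lo else (r[i] - lo) / (hi - lo))
    return out
```

Values near 1 mean the current RSI sits at the top of its recent range and values near 0 at the bottom; many charting tools rescale this to 0-100 and add smoothed %K/%D lines on top.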

It’s a system which allows people to automate their crypto trades, often through platforms like bitcoin360ai.com, where investors can set rules for buying and selling. Then,...

AI crypto trading bots automate the buying and selling of positions based on technical indicators in the cryptocurrency market, which saves time and can improve performance....
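
As a hedged illustration of how a rule-based bot can turn an indicator into buy/sell decisions (not the logic of any particular product), here is a simple moving-average crossover in Python. The window sizes are arbitrary assumptions:

```python
def sma(values: list[float], window: int) -> list[float]:
    """Simple moving average; element j covers values[j : j + window]."""
    return [sum(values[i - window + 1 : i + 1]) / window
            for i in range(window - 1, len(values))]


def crossover_signals(closes: list[float], fast: int = 5, slow: int = 20) -> list[tuple[int, str]]:
    """Return (index into closes, "buy" or "sell") wherever the fast SMA crosses the slow SMA."""
    if fast >= slow:
        raise ValueError("fast window must be shorter than slow window")
    # Drop the first (slow - fast) fast-SMA values so both series line up on the same closes.
    fast_ma = sma(closes, fast)[slow - fast:]
    slow_ma = sma(closes, slow)
    signals = []
    for i in range(1, len(slow_ma)):
        prev = fast_ma[i - 1] - slow_ma[i - 1]
        curr = fast_ma[i] - slow_ma[i]
        idx = i + slow - 1  # position of this bar in `closes`
        if prev <= 0 < curr:
            signals.append((idx, "buy"))   # fast average crossed above slow
        elif prev >= 0 > curr:
            signals.append((idx, "sell"))  # fast average crossed below slow
    return signals
```

For example, `crossover_signals([5, 5, 5, 5, 6, 7, 8, 8, 6, 4, 3, 3], fast=2, slow=4)` flags a buy when prices start rising and a sell once they turn down. A real bot would also need position sizing, fees, and risk limits, none of which are modelled here.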

The gambling world is being reshaped by emerging technologies. One of the most thrilling recent advancements is the integration of AI and crypto into the online gambling sector....

AI tools can generate high-quality digital human model images that help fashion brands reduce waiting time, save costs, and improve diversity. In an industry where trends...

Character.AI prioritizes maintaining respectful and appropriate interactions. To achieve this, the Character AI NSFW filter is designed to block any unsuitable or ethically questionable chats. This...

Key Takeaway: Discover the power of the Transformer Neural Network Model in deep learning and NLP! In this section, we’ll dive into the background of neural...
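
At the heart of the Transformer is scaled dot-product attention, softmax(QK^T / sqrt(d_k))V. Here is a dependency-free Python sketch of that single operation. It deliberately omits multi-head projections, masking, and batching, and it uses plain lists for clarity rather than speed:

```python
import math


def softmax(row: list[float]) -> list[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def attention(q: list[list[float]], k: list[list[float]], v: list[list[float]]):
    """Scaled dot-product attention.

    q: n_q query vectors of size d_k; k: n_k key vectors of size d_k;
    v: n_k value vectors of size d_v.
    Returns (outputs, weights): n_q outputs of size d_v and the n_q x n_k weights.
    """
    scale = math.sqrt(len(k[0]))
    # Similarity of every query with every key, scaled by sqrt(d_k).
    scores = [[dot(qi, kj) / scale for kj in k] for qi in q]
    # Each row of weights is a probability distribution over the keys.
    weights = [softmax(row) for row in scores]
    # Each output is the weighted average of the value vectors.
    d_v = len(v[0])
    outputs = [
        [sum(w * v[j][c] for j, w in enumerate(row)) for c in range(d_v)]
        for row in weights
    ]
    return outputs, weights
```

In a full Transformer, Q, K, and V come from learned linear projections of the token embeddings, and several of these attention "heads" run in parallel (multi-head attention).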

As the world prepares to enter the Fourth Industrial Revolution, educational models are at a crossroads. The arrival of a new wave of technological advancement, led...

Employee engagement is essential for the growth of any organization. An engaged workforce is likely to put in its best efforts toward meeting organizational goals and...

What is the best image recognition app? Is there an app that can find an item from a picture? One option is Google Goggles (since succeeded by Google Lens), an image-recognition mobile app that uses...

Numerous enterprises are starting to explore the realms of machine learning and artificial intelligence (AI). Yet, for the majority embarking on this transformative voyage, the outcomes...

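“Stochastic parrot” is a term from machine learning, coined by Emily M. Bender, Timnit Gebru and colleagues in the 2021 paper “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?”, for a language model that mimics human language patterns by sampling from learned probabilities without understanding meaning. The stochastic models it describes are nonetheless powerful tools for various applications...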
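
To make the "parrot" idea concrete, here is a toy bigram Markov-chain text generator in Python. It only illustrates sampling the next word in proportion to how often it followed the current word in the training text; modern language models use neural networks, not lookup tables like this one:

```python
import random
from collections import defaultdict


def train_bigrams(text: str) -> dict[str, list[str]]:
    """Map each word to every word observed right after it (duplicates kept)."""
    words = text.split()
    table: dict[str, list[str]] = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def babble(table: dict[str, list[str]], start: str, length: int = 10, seed=None) -> str:
    """Generate up to `length` words by repeatedly sampling an observed successor."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        options = table.get(out[-1])
        if not options:
            break  # dead end: this word never had a successor in the training text
        # Duplicates in `options` make frequent successors proportionally more likely.
        out.append(rng.choice(options))
    return " ".join(out)
```

Every word pair the generator emits was seen in its training text, so the output can sound fluent while carrying no understanding of what it says, which is the point the term makes about large language models.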

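Chinchilla AI by DeepMind, presented in April 2022, is a 70-billion-parameter language model that showed a smaller model trained on more data (about 1.4 trillion tokens) can outperform much larger models trained with the same compute budget. It’s transforming how we think of AI and...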
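
A back-of-the-envelope version of the Chinchilla result can be written in a few lines of Python. It rests on two approximations: training a dense transformer costs about 6 FLOPs per parameter per token (C ≈ 6ND), and Chinchilla's 70B-parameter, 1.4T-token setup implies a rule of thumb of roughly 20 training tokens per parameter. Both are simplifications, not exact values from the paper:

```python
import math

FLOPS_PER_PARAM_TOKEN = 6  # approximation: C ≈ 6 * N * D for dense transformers
TOKENS_PER_PARAM = 20      # rule of thumb implied by 1.4T tokens / 70B params


def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute C = 6 * N * D, in FLOPs."""
    return FLOPS_PER_PARAM_TOKEN * n_params * n_tokens


def compute_optimal_size(flops: float) -> tuple[float, float]:
    """Split a compute budget into (parameters N, tokens D) with D = 20 * N.

    Substituting D = 20N into C = 6ND gives C = 120 * N**2, so N = sqrt(C / 120).
    """
    n_params = math.sqrt(flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    return n_params, TOKENS_PER_PARAM * n_params
```

`training_flops(70e9, 1.4e12)` gives about 5.88e23 FLOPs, and feeding that budget back into `compute_optimal_size` recovers roughly 70 billion parameters and 1.4 trillion tokens, which shows the balance between model size and data that Chinchilla argued for.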

Introducing MMLU: the superhero of language-model benchmarks! Rather than a new modeling approach, it is a test that measures how well machines comprehend and reason about human language, using multiple-choice questions across 57 subjects. Massive Multitask Language Understanding (MMLU)...
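
The following rough sketch shows how MMLU-style multiple-choice evaluation can be scored. Each question is rendered with lettered options, the model's answer letter is compared with the key, and accuracy is reported per subject. The `Question` class, the prompt layout, and the unweighted average across subjects are assumptions for illustration; published evaluation harnesses differ in prompt format and aggregation:

```python
from collections import defaultdict
from dataclasses import dataclass

LETTERS = "ABCD"


@dataclass
class Question:
    """Hypothetical container for one four-option multiple-choice item."""
    subject: str
    question: str
    choices: list[str]  # four options, shown as A-D
    answer: str         # correct letter, e.g. "B"


def format_prompt(q: Question) -> str:
    """Render the question with lettered options, ending with an answer cue."""
    lines = [q.question]
    lines += [f"{letter}. {choice}" for letter, choice in zip(LETTERS, q.choices)]
    lines.append("Answer:")
    return "\n".join(lines)


def score(questions: list[Question], predictions: list[str]):
    """Return per-subject accuracy and the unweighted mean over subjects."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for q, pred in zip(questions, predictions):
        total[q.subject] += 1
        correct[q.subject] += pred.strip().upper() == q.answer
    per_subject = {s: correct[s] / total[s] for s in total}
    macro = sum(per_subject.values()) / len(per_subject)
    return per_subject, macro
```

In practice, `predictions` would come from sending each `format_prompt(q)` to the model under test and reading back the letter it chooses.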

MLOps is a special discipline linking machine learning and operations. It focuses on simplifying the machine learning lifecycle, from development to deployment and maintenance. MLOps orchestrates...

Unless you have been living under a rock, you will have seen news and opinions about the growth of AI in recent months. Whether it is...

With the advent of technology, society is facing some groundbreaking and life-changing tools that can reshape our reality as we know it. When we are talking...

I had a great time watching Apple’s presentation this year. Judging by my Twitter feed, Apple’s new platform made a huge impression on many...

Pornpen.ai provides adult AI-generated images, allowing users to quickly find what they need and easily customize the images. With our paid Pro mode, you can easily...

Artificial Intelligence (AI) is rapidly growing in popularity and importance, and its impact can be seen in many different areas of our lives. With the increasing...

Struggling with ChatGPT? Don’t worry! We have your back. This article will guide you through making an app with ChatGPT (the OpenAI API),...
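
Here is a minimal sketch of the core call such an app makes. It uses only Python's standard library to POST to OpenAI's Chat Completions endpoint. The model name is an assumption, so substitute any chat model your account can use. The API key is read from an `OPENAI_API_KEY` environment variable, and OpenAI's official SDK offers a higher-level alternative:

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"
MODEL = "gpt-4o-mini"  # assumption: replace with a chat model available to your account


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions request for a single user prompt."""
    body = {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the assistant's reply text (requires network access)."""
    req = build_request(prompt, os.environ["OPENAI_API_KEY"])
    with urllib.request.urlopen(req, timeout=30) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

With the key set, `ask("Suggest three names for a recipe app.")` returns the model's reply as a string. Keeping request construction in `build_request` separate from sending makes it easy to test without calling the API.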

To access the Midjourney Discord server, you need an invitation link or a direct invitation from a member of the server. Here are the steps to...

AI-powered writing tools can be of great help to anyone who wants to improve their writing skills and the quality of texts they create. However, you...

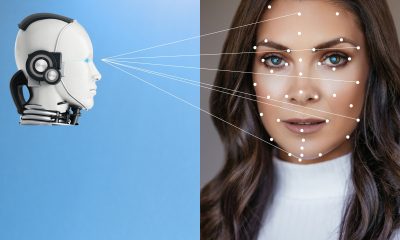

Anxious about your privacy and security? AI facial recognition and IoT are transforming our lives. It is vital to understand how this technology works and the...

Introduction: Briefly introduce ChatGPT Plus and what it offers. Features of ChatGPT Plus: Discuss the features and benefits of upgrading to ChatGPT Plus, such as access...

Welcome to my blog, where I discuss the latest developments in chatbot technology and its application to coding! Whether you’re a beginner or an experienced developer,...

Quantum AI is a new system for trading bitcoin that has become popular recently. Many robots can help you trade automatically, but Quantum AI seems to...

Bitcoin Ifex 360 AI is an online automated trading bot to trade cryptocurrency. It has been the best investment traders can do for massive profit returns....

Struggling to build a successful AI team? You’re not alone! It’s possible to create a reliable and cost-efficient offshore AI team. We’ll show you how! Here...

genei is an AI research tool that improves productivity by using a custom ML algorithm to summarise articles, analyse research and find key information,...
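
The teaser does not describe genei's custom algorithm. As a hedged stand-in, here is a classic frequency-based extractive summariser in Python. It scores each sentence by the average frequency of its non-stopword terms and keeps the top `n` sentences in their original order. The stopword list and scoring are simplifications, far from a production summariser:

```python
import re
from collections import Counter

# Deliberately tiny, assumed stopword list; real systems use much larger ones.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "it", "that", "this", "for", "on", "with", "as", "by", "be"}


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter: break after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def content_words(text: str) -> list[str]:
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def summarise(text: str, n: int = 2) -> str:
    """Keep the n highest-scoring sentences, preserving their original order."""
    sentences = split_sentences(text)
    freq = Counter(content_words(text))

    def score(sentence: str) -> float:
        tokens = content_words(sentence)
        return sum(freq[t] for t in tokens) / (len(tokens) or 1)

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return " ".join(sentences[i] for i in sorted(ranked[:n]))
```

Extractive methods like this one only select existing sentences; abstractive tools that rewrite text in new words typically rely on trained language models instead.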

Here is a list of examples of how ChatGPT can be used in marketing that I found useful and actionable. – E-commerce Hacks: Create a revenue-driven...

Searching for the perfect analytical and AI services for your property? Look no further! Here you’ll find the latest mathematical algorithms, predictive analytics, machine learning and...

You’ve definitely seen these impressive illustrations on Twitter, Reddit and popular tech tabloids. What is Midjourney? Midjourney turns text descriptions into artwork. Some AI-generated art...

Are you tired of posting the same old selfies on Instagram? Do you want to shake things up a little? FaceMagic is here...

Anyone can make deepfakes to support Ukrainians without writing a single line of code. In this story, we see how image animation technology is now ridiculously...
