DALL-E: Creating Images from Text – Try Online

Try Craiyon for free online: https://huggingface.co/spaces/dalle-mini/dalle-mini

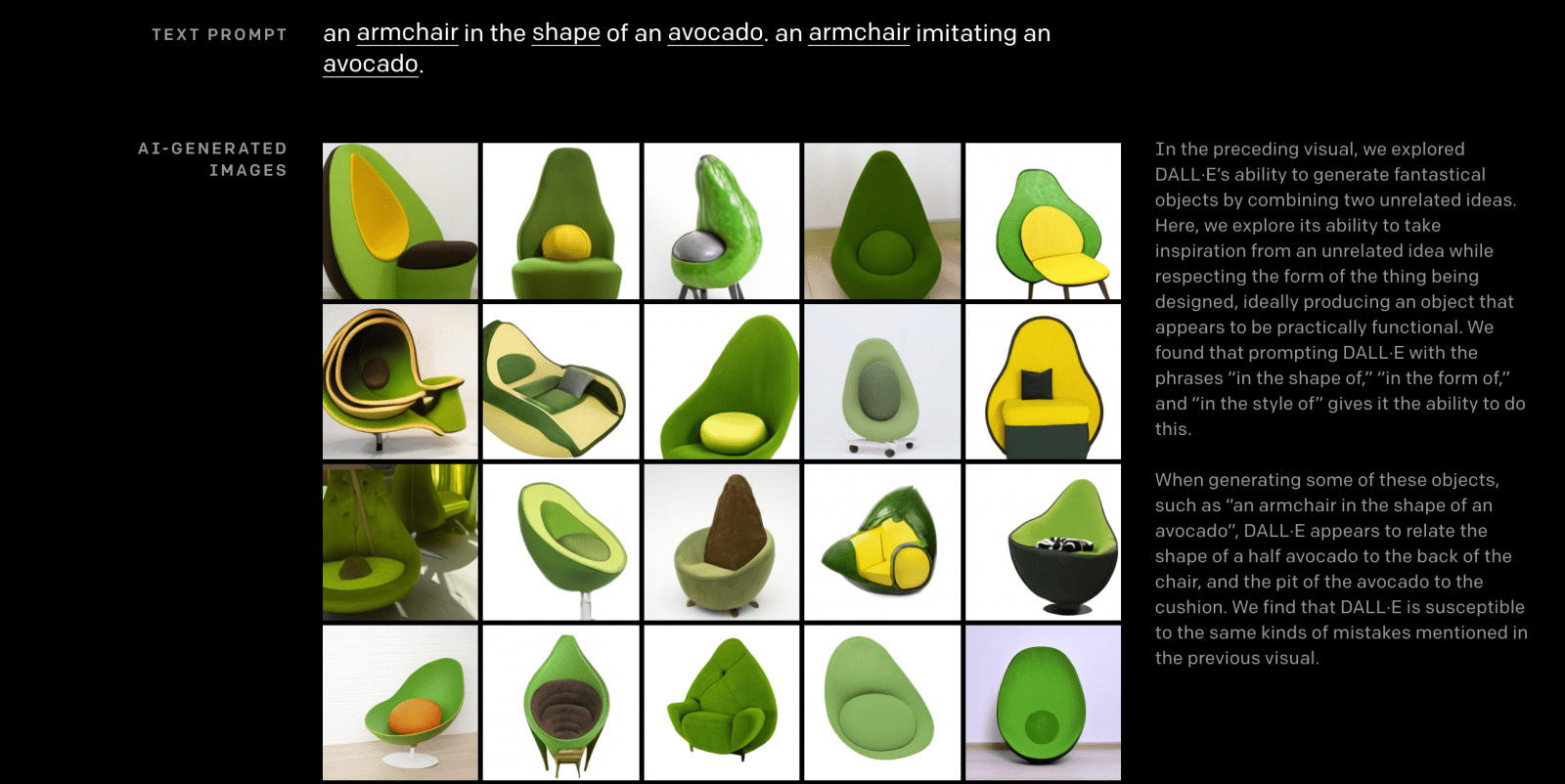

OpenAI has shared the results of its research, and I can only call them magic: DALL·E, a new neural network that continues the GPT-3 idea of transformers, but this time generates images from text.

GPT-3 (Generative Pre-trained Transformer 3) is an autoregressive language model, released on June 11, 2020, that uses deep learning to produce human-like text. It has 175 billion parameters.

I often fantasize here about feeding Harry Potter to a neural network and getting illustrations of every scene in the book. It seems this is no longer a fantasy, although OpenAI still isn’t giving us anything to dig into deeper.

DALL·E is a neural network with 12 billion parameters, trained on image-text pairs. Its tasks:

- Synthesize images from a text description

- Complete an image from a partial image given as input, taking the text description into account
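The two tasks above differ only in what is fed in before generation starts. According to OpenAI’s blog post, DALL·E reads the text and the image as a single stream of tokens and generates the image tokens one after another. The toy Python sketch below illustrates that idea under loud assumptions: the "model" is a hypothetical stand-in function rather than a 12-billion-parameter transformer, the vocabulary size and token IDs are made up, and the step that turns image tokens back into pixels is left out entirely.

```python
import random
from typing import Callable, List, Optional

# Hypothetical, tiny image-token vocabulary (the real model's is far larger).
IMAGE_VOCAB_SIZE = 8


def toy_next_token_probs(prefix: List[int]) -> List[float]:
    """Hypothetical stand-in for the transformer: returns a probability
    distribution over the next image token, given everything seen so far."""
    # Favor one token that depends on the prefix, so the output is conditioned on it.
    favored = sum(prefix) % IMAGE_VOCAB_SIZE
    weights = [1.0] * IMAGE_VOCAB_SIZE
    weights[favored] = 5.0
    total = sum(weights)
    return [w / total for w in weights]


def generate_image_tokens(
    text_tokens: List[int],
    num_image_tokens: int,
    partial_image_tokens: Optional[List[int]] = None,
    model: Callable[[List[int]], List[float]] = toy_next_token_probs,
    seed: int = 0,
) -> List[int]:
    """Autoregressive sampling over one text+image token stream.

    - Task 1 (text -> image): pass only text_tokens.
    - Task 2 (image completion): also pass the known partial_image_tokens;
      they are kept as-is and the rest is sampled after them.
    """
    rng = random.Random(seed)
    image_tokens = list(partial_image_tokens or [])
    # The text comes first, followed by any image tokens already known.
    stream = list(text_tokens) + image_tokens
    while len(image_tokens) < num_image_tokens:
        probs = model(stream)
        # Sample one token, then append it so the next step is conditioned on it.
        token = rng.choices(range(IMAGE_VOCAB_SIZE), weights=probs, k=1)[0]
        image_tokens.append(token)
        stream.append(token)
    return image_tokens


if __name__ == "__main__":
    prompt = [3, 1, 4]  # hypothetical token IDs for a text prompt
    print(generate_image_tokens(prompt, 6))
    print(generate_image_tokens(prompt, 6, partial_image_tokens=[2, 2]))
```

In the completion call, the first two returned tokens are the given `[2, 2]` and only the remaining four are sampled, which mirrors how a partial image plus a text description can be continued by the same generator.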

OpenAI has teased work in this area before, but it has finally reached a jaw-dropping level. Look at the examples I have attached; the text given as input is shown at the top of each one.

I’m sure it won’t be opened up for everyone to play with just yet.

I expect this research to have a major impact on many fields and industries, since the possible applications are endless.

- More details here: https://openai.com/blog/dall-e/

- Try Craiyon (formerly DALL·E mini) online here: https://www.craiyon.com/

Your Own AI Designer in 1 click

Fiction that has already become reality. You tell the computer (in words!) what you want to see in a picture, and it draws it.

One more small step for GPT-3 and a huge leap for the design industry. You describe a photo or illustration in words and voilà (for now, at 256×256 pixels). In short, there will be more good design around us in the next 10 to 15 years. Designers’ work will become much more interesting, because a lot of the routine will go away.

This is a client’s paradise: “play with the fonts”, “make the logo bigger”, “highlight it in red”. All of this is done without complaint by a machine with unshakable nerves.

Beyond that, unusual illustrations for children’s books, for example, are also welcome.

Which profession should you master so as not to be left behind in 10 years?

Read our free guide on how to use the DALL·E tool.
