I found a 5,000-character limit in Google Translate, which is very surprising. The popular service now lets users translate only 5,000 characters of text in one go,...

How to Quickly Crawl and Index a Site with Millions of Pages? Lately, I often hear questions about getting large sites into the index quickly. And today in the...

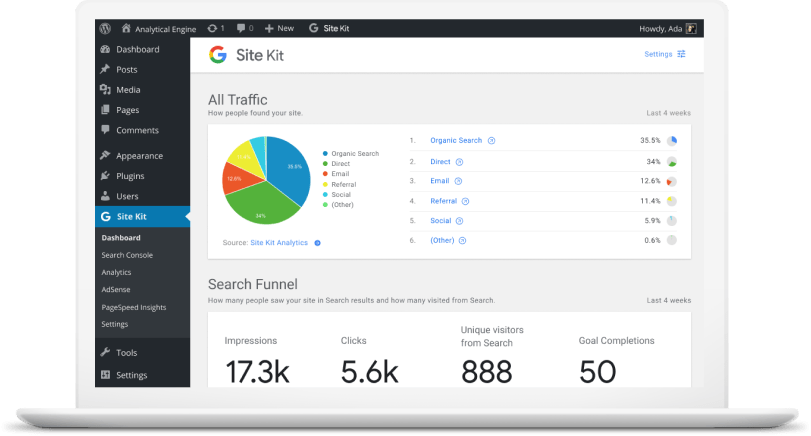

Meet Google Site Kit, the latest analytics/SEO plug-in developed by Google for WordPress web development.

Googelecom – Google’s web-based store is a cool place to buy products. By using the “find a store near me” feature, you can search according to...

In the ever-changing digital landscape of rising PPC costs and growing competition, maximizing the efficiency of your marketing budget has become more important than ever. In...

Google launched a new phone . . . but that’s not the real news. Let me explain. Let’s be real. Unless Google built a physical rocket...
