News

How to make a Java Web Crawler?

This post is for those who want to step-by-step learn how to create their own Crawler in Java. A web-crawler is considered by many to be a complex application requiring deep knowledge. The guide will guide you through several simple steps to quickly master the creation of Web Crawler. You can change the creation of the scanner using Java using this textbook for your needs after spending a little time.

Given:

– You need to know the basics of Java, a bit about the SQL database and MySQL.

– Computer or remote server.

Unknown:

Web Crawler for collecting information on a given logic.

Choose goals:

For example, let’s collect all the information on the site of your university “BigBrain.edu” on the topic “apples”.

Progress:

1. The algorithm of the searcher’s work consists in the following steps:

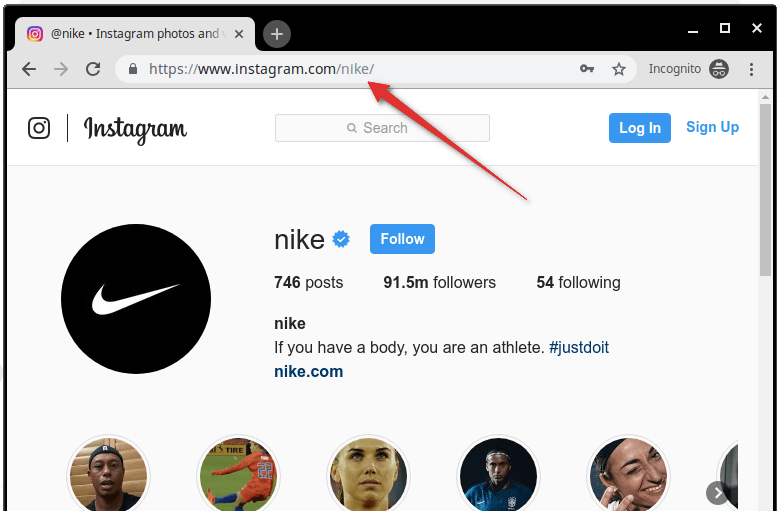

– Open the root web page (“BigBrain.edu”) and collect there all the external links from this page. To do this we will use JSoup, which is a convenient and simple Java library for analyzing HTML.

– Then we analyze the received URL-addresses and collect new links.

– When performing the second and subsequent steps, we check which page was processed earlier so that each URL is processed only once. It is for this control that we need a database.

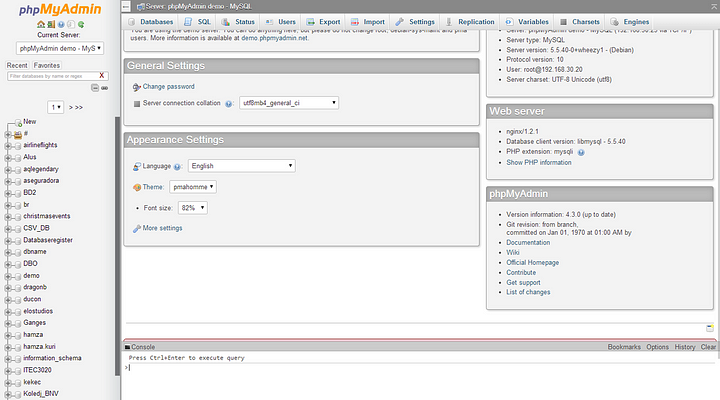

2. Configuring the MySQL database

Owners of Ubuntu and a guide for Windows users.

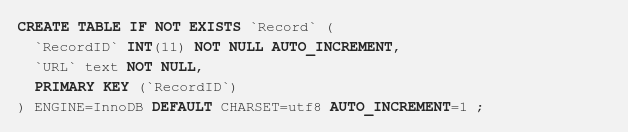

2.1. Create a database and table

Create a database named “Crawler” and create a table called “Record”, as shown below:

3. Run the crawling using Java

1) Download the main JSoup library from http://jsoup.org/download.

Download the file mysql-connector-java-xxxbin.jar from http://dev.mysql.com/downloads/connector/j/

2) Now create the project in its eclipse with the name “JavaCrawler” and add the jar jsoup and mysql-jar files that you uploaded to the Java Build Path.

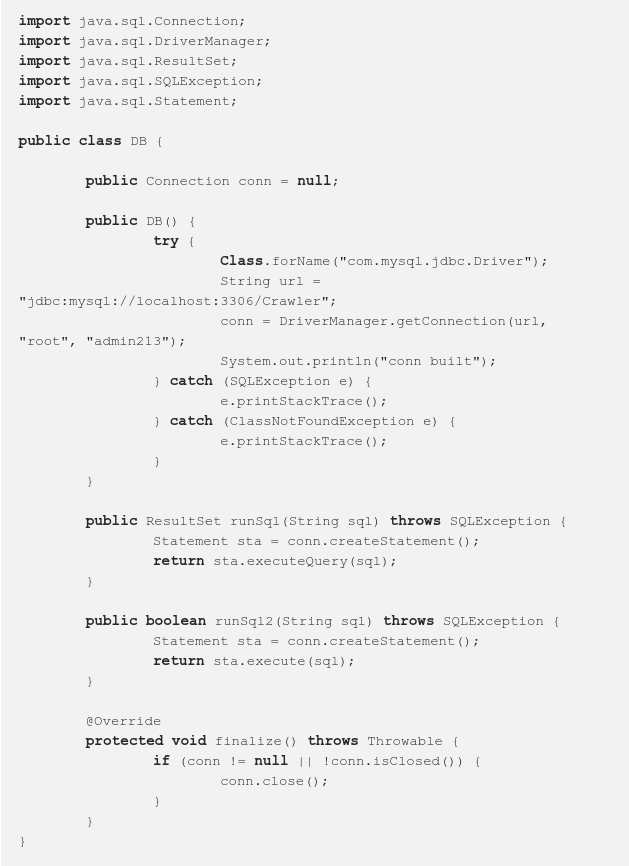

3) Create a class called “JCDB”, which is used to handle database actions.

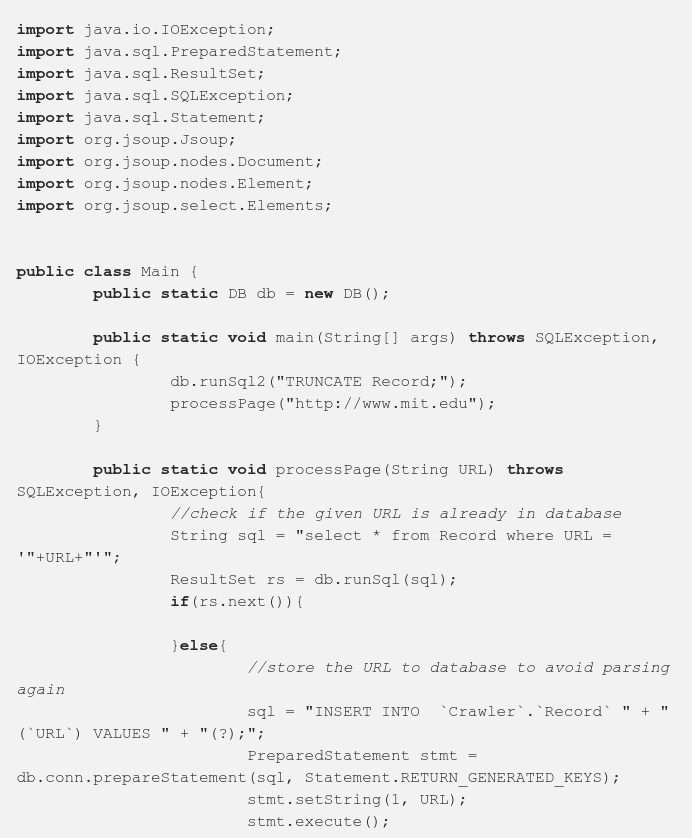

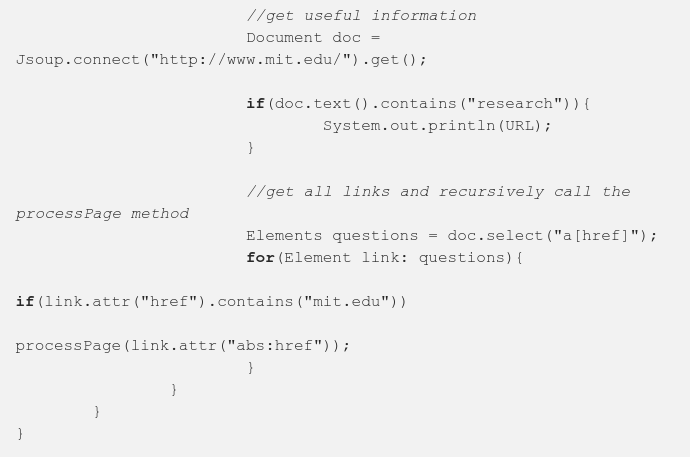

4) Create a class with name “Main” which will be our crawler.

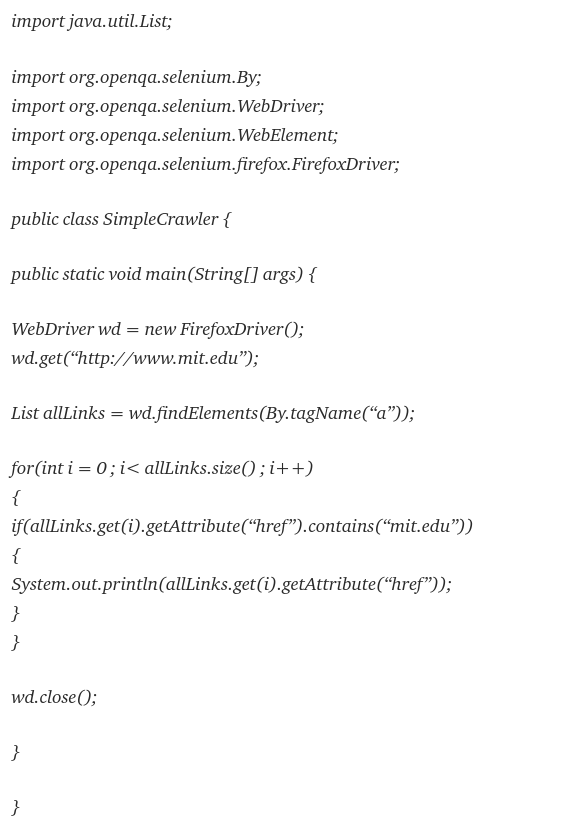

Recommend to use Selenium to crawl a web below code by open Firefox:

How develop custom web crawler on Python for OLX? You can do it by guide from Adnan.

-

Cyber Risk Management2 days ago

Cyber Risk Management2 days agoHow Much Does a Hosting Server Cost Per User for an App?

-

Outsourcing Development2 days ago

Outsourcing Development2 days agoAll you need to know about Offshore Staff Augmentation

-

Software Development2 days ago

Software Development2 days agoThings to consider before starting a Retail Software Development

-

Grow Your Business2 days ago

Grow Your Business2 days agoThe Average Size of Home Office: A Perfect Workspace

-

Solution Review2 days ago

Top 10 Best Fake ID Websites [OnlyFake?]

-

Business Imprint2 days ago

How Gaming Technologies are Transforming the Entertainment Industry