
3D from Image

ML 3D reconstruction from a single RGB image

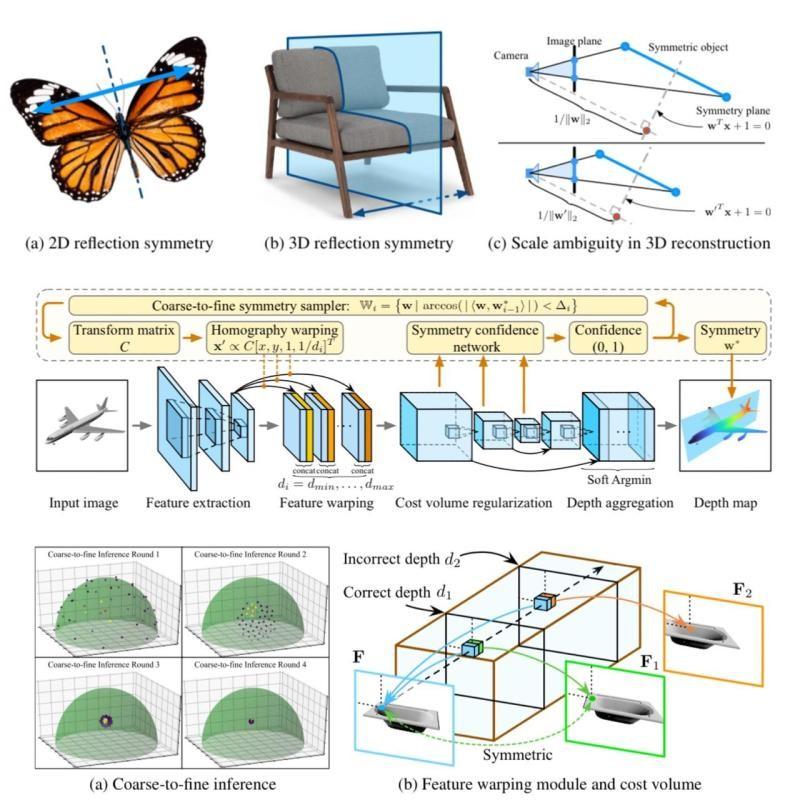

SymmetryNet is a geometry-based, end-to-end deep learning framework that detects an object's plane of reflection symmetry and uses it to predict depth maps by finding pixel-wise correspondences within the same image.

A geometry-based learning framework that detects reflection symmetry for 3D reconstruction.

Experiments on the ShapeNet dataset show that this reconstruction method significantly outperforms the previous state-of-the-art single-view 3D reconstruction networks in terms of the accuracy of camera poses and depth maps.

Learning to Detect 3D Reflection Symmetry for Single-View Reconstruction

3D reconstruction from a single RGB image is a challenging problem in computer vision. Previous methods are usually purely data-driven, which leads to inaccurate 3D shape recovery and limited generalization. In this project, the authors focus on object-level 3D reconstruction and present a geometry-based, end-to-end deep learning framework. It first detects the mirror plane of the reflection symmetry that commonly exists in man-made objects, and then predicts depth maps by finding the intra-image pixel-wise correspondences that this symmetry induces.
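To make the idea of intra-image correspondence concrete, here is a minimal NumPy sketch, not the authors' implementation. It assumes a pinhole camera with intrinsics `K` and a mirror plane written as `normal . x + offset = 0` in camera coordinates. The function names `reflect_points` and `symmetric_pixel` are hypothetical and chosen only for illustration. Given a pixel and a depth guess, it back-projects the pixel to 3D, reflects the point across the plane, and projects it back into the same image.

```python
import numpy as np

def reflect_points(points, normal, offset):
    """Reflect N x 3 points across the plane normal . x + offset = 0."""
    scale = np.linalg.norm(normal)
    n = normal / scale            # unit normal
    d = offset / scale            # offset rescaled to match the unit normal
    signed_dist = points @ n + d  # signed distance of each point to the plane
    return points - 2.0 * signed_dist[:, None] * n

def symmetric_pixel(pixel, depth, K, normal, offset):
    """Return the pixel where the mirror image of `pixel` at `depth` lands."""
    u, v = pixel
    ray = np.linalg.inv(K) @ np.array([u, v, 1.0])  # back-project to a ray
    point = depth * ray                             # 3D point at this depth
    mirrored = reflect_points(point[None, :], normal, offset)[0]
    proj = K @ mirrored                             # project back to the image
    return proj[:2] / proj[2]

# Assumed example camera: focal length 100 px, principal point (50, 50).
K = np.array([[100.0, 0.0, 50.0],
              [0.0, 100.0, 50.0],
              [0.0, 0.0, 1.0]])
plane_normal = np.array([1.0, 0.0, 0.0])  # mirror plane x = 0
match = symmetric_pixel((70, 50), 2.0, K, plane_normal, 0.0)
```

With this example camera and the plane x = 0, pixel (70, 50) at depth 2 maps to pixel (30, 50). For a plane that does not pass through the camera center, the matching pixel changes with the depth guess, and that dependence is what lets the correspondence constrain depth.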

The method exploits the geometric cues from symmetry at test time by building plane-sweep cost volumes, a powerful tool that has long been used in multi-view stereopsis.
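The article does not spell out how the cost volume is built, so the following is only a simplified NumPy sketch under stated assumptions, not the authors' network. For each candidate depth, every pixel is back-projected, mirrored across the plane, and re-projected; the cost is the absolute difference between the pixel's feature and the feature at its mirrored location. The function name `symmetry_cost_volume`, the nearest-neighbor sampling, and the L1 cost are all illustrative choices.

```python
import numpy as np

def symmetry_cost_volume(features, K, normal, offset, depths):
    """Build a len(depths) x H x W cost volume from an H x W x C feature map.

    Pixels whose mirrored location falls outside the image get infinite cost.
    """
    H, W, _ = features.shape
    scale = np.linalg.norm(normal)
    n, d = normal / scale, offset / scale

    # Homogeneous pixel grid and the viewing ray of every pixel.
    v, u = np.mgrid[0:H, 0:W]
    pix = np.stack([u, v, np.ones_like(u)], axis=-1).astype(float)
    rays = pix @ np.linalg.inv(K).T                # H x W x 3

    volume = np.full((len(depths), H, W), np.inf)
    for i, z in enumerate(depths):
        pts = z * rays                             # 3D points at depth z
        mirrored = pts - 2.0 * (pts @ n + d)[..., None] * n
        proj = mirrored @ K.T
        valid = proj[..., 2] > 1e-8                # in front of the camera
        safe_z = np.where(valid, proj[..., 2], 1.0)
        mu = np.rint(proj[..., 0] / safe_z).astype(int)
        mv = np.rint(proj[..., 1] / safe_z).astype(int)
        valid &= (mu >= 0) & (mu < W) & (mv >= 0) & (mv < H)
        mu_c, mv_c = np.clip(mu, 0, W - 1), np.clip(mv, 0, H - 1)
        # L1 feature difference between each pixel and its mirrored pixel.
        diff = np.abs(features - features[mv_c, mu_c]).sum(axis=-1)
        volume[i] = np.where(valid, diff, np.inf)
    return volume
```

A per-pixel depth estimate could then be read off with `np.argmin(volume, axis=0)`. A learned pipeline would typically feed the volume to further network layers rather than take a hard minimum.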

To the authors' knowledge, this is the first work that uses the concept of cost volumes in the setting of single-image 3D reconstruction. They conduct extensive experiments on the ShapeNet dataset and find that their reconstruction method significantly outperforms the previous state-of-the-art single-view 3D reconstruction networks in terms of the accuracy of camera poses and depth maps, without requiring objects to be completely symmetric.

Code is available at https://github.com/zhou13/symmetrynet.

by Steve Nouri

Other Tools for Transforming Images into 3D Models

- Selva3D

- All3DP

- Embossify

- Smoothie 3D

- Insight3d
